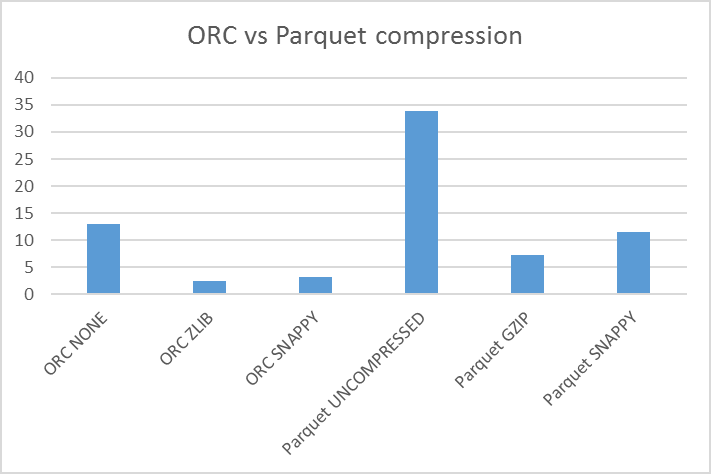

The first run produced 73 output files, each around 12.5 MB in size. This is inefficient, as I explained earlier. With the above settings, we are basically telling Hive an approximate maximum number of reducers to run, with the caveat that the size of each reducer's output should be restricted to about 128 MB. The parameter `.factor` tells Hive to launch up to 20 reducers. This is just a guess on my part, and Hive will not necessarily enforce it.

My job completed with only 10 reducers, and therefore 10 output files. Since I set a value of 128 MB for `.per.reducer`, Hive tries to fit the reducer output into files that come close to 128 MB each rather than simply running 20 reducers. If I had not set `.per.reducer`, Hive would have launched 20 reducers, because my query output would have allowed for this. 128 MB is only an approximation for each reducer's output when setting `.per.reducer`. In this example the total size of the output files is 920 MB, so the 10 reducers Hive launched work out to about 92 MB per reducer file. When I set the value to 64 MB, Hive launched 20 reducers, with each file being around 46 MB.

If `.per.reducer` is set to a very high value, you will have fewer reducers than if it is set to a lower value. Higher values result in fewer reducers being launched, which can also degrade performance. You need just the right level of parallelism.

Apache Ambari is a web interface to manage and monitor HDInsight clusters.

Enable the cost-based optimizer (CBO) for efficient query execution based on cost, and fetch table statistics. Partition and column statistics are fetched from the metastore. If you have too many partitions and/or columns, this could degrade performance.

Vectorized query execution processes data in batches of 1024 rows instead of one row at a time. Enable predicate pushdown (PPD) to filter at the storage layer.

You can easily drop partitions that are no longer needed or for which data has to be reprocessed.
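The reducer sizing and optimizer switches discussed above might be set per session along the following lines. This is only a sketch, not the author's exact configuration: it assumes the truncated `.per.reducer` name refers to Hive's `hive.exec.reducers.bytes.per.reducer`, and the other property names are the standard Hive ones for CBO, statistics, vectorization, and predicate pushdown rather than names taken from the text.

```sql
-- Assumption: ".per.reducer" in the text is hive.exec.reducers.bytes.per.reducer.
-- The value is in bytes: 128 MB = 128 * 1024 * 1024 = 134217728.
-- For the 64 MB run, the value would be 67108864.
SET hive.exec.reducers.bytes.per.reducer=134217728;

-- Cost-based optimizer and statistics (assumed property names).
SET hive.cbo.enable=true;
SET hive.compute.query.using.stats=true;
SET hive.stats.fetch.column.stats=true;
-- Partition-level statistics fetching; not available in every Hive release.
SET hive.stats.fetch.partition.stats=true;

-- Vectorized query execution (batches of rows instead of row by row).
SET hive.vectorized.execution.enabled=true;
SET hive.vectorized.execution.reduce.enabled=true;

-- Predicate pushdown, including pushdown to the storage layer.
SET hive.optimize.ppd=true;
SET hive.optimize.ppd.storage=true;
```

With 920 MB of total output, a 128 MB target works out to roughly 920 / 128 ≈ 7.2 files, so the 10 reducers seen in the example are consistent with the value acting as an approximate target rather than a hard limit.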
Partition your tables by date if you are storing a high volume of data per day. Run the Hive query with the optimization settings in place, and schedule an automated ETL job to run at certain times:

`ANALYZE TABLE page_views_orc COMPUTE STATISTICS FOR COLUMNS;`
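As a rough illustration of date partitioning, dropping a partition, and refreshing statistics, the following HiveQL uses a hypothetical table `page_views_by_day` with made-up columns. None of these names or dates come from the text, and the ORC storage format is only assumed from the `_orc` suffix of the table named in the `ANALYZE` statement.

```sql
-- Hypothetical table: the name, columns, and partition key are illustrative only.
CREATE TABLE IF NOT EXISTS page_views_by_day (
  user_id   BIGINT,
  page_url  STRING,
  view_time TIMESTAMP
)
PARTITIONED BY (view_date STRING)
STORED AS ORC;

-- Drop a single day's partition, for example before reprocessing that day.
ALTER TABLE page_views_by_day
  DROP IF EXISTS PARTITION (view_date = '2020-01-01');

-- Recompute column statistics for one partition after it has been reloaded.
ANALYZE TABLE page_views_by_day PARTITION (view_date = '2020-01-02')
  COMPUTE STATISTICS FOR COLUMNS;
```

Because each day lives in its own partition, dropping or reloading a day touches only that partition's files, and a scheduled job can limit the `ANALYZE` step to the partitions that changed.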
